Demystifying the Language Model

At first glance, ChatGPT seems like magic. You type a prompt, and it responds with eerily human-like text. But under the hood, ChatGPT isn’t mystical at all—just a clever combination of some powerful but relatively simple ingredients. How does ChatGPT work?

It Starts with a Lot of Data

Like a chef preparing a dish, ChatGPT needs ingredients. Its raw materials are text. The text comes from vast archives of digitized books, Wikipedia articles, blog posts, and other online content written by humans. This gives ChatGPT hundreds of billions of words and patterns of words to learn from.

This real-world data is the bedrock on which ChatGPT builds its conversational abilities. It’s a library of examples of how actual people construct sentences, develop ideas, and communicate information. Without the words used to “train the model”, the system would have no clue how human language works.

Train the Model

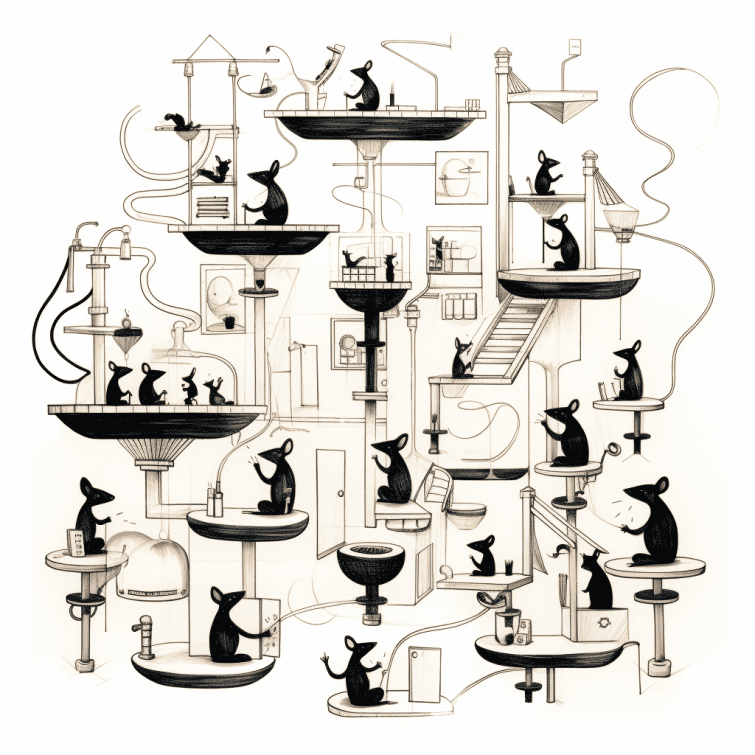

Imagine you’re learning to cook by reading a lot of recipes. The raw recipes you read could be considered “training data.” As you read, you notice repeated patterns, elements, processes, and techniques. You learn, for example, that whenever you bake anything, you need to preheat the oven. You don’t memorize every recipe; instead, you internalize cooking skills and techniques (the learned parameters and weights). Once you’ve learned, you no longer need to refer to every recipe you ever read; you can cook effectively using your acquired skills. This is roughly how large language models (LLMs) like ChatGPT work:

- During Training: The LLM is exposed to a vast amount of text. In this phase, the model learns by repeatedly adjusting its internal parameters (its neural network weights) so that it gets better at predicting the next word of the text it is shown.

- After Training: Once the training is complete, the model retains its learned parameters – essentially, the knowledge it has gained from the data. However, it does not store the actual training data within itself.

- In Operation: When you interact with the LLM, it uses these learned parameters to process your queries and generate responses. It’s applying what it learned during training, but it’s not looking back at the training data itself.

The LLM retains the ‘lessons learned’ from the data (in the form of model parameters), but not the data itself. This approach makes the model efficient and capable of generating or interpreting language without needing to access the original training data.
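To make the training/operation split concrete, here is a deliberately tiny sketch in Python. It is a toy under loud assumptions, not how ChatGPT is trained: the "model" has a single parameter `w`, the "training data" is four hypothetical number pairs instead of text, and the hypothetical `train` function uses plain gradient descent to nudge `w` toward better predictions. After training, the data is deleted and `predict` still works, because everything it needs now lives in `w`.

```python
# Toy sketch: ONE parameter instead of billions, and hypothetical number
# pairs (x, y) instead of text. The pairs roughly follow y = 2x.
def train(data, steps=200, lr=0.01):
    """Adjust parameter w so that w * x predicts y (gradient descent on squared error)."""
    w = 0.0  # start with an untrained parameter
    for _ in range(steps):
        # Gradient of the mean squared error with respect to w
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad  # nudge w in the direction that reduces the error
    return w

def predict(w, x):
    """Operation phase: uses only the learned parameter, never the training data."""
    return w * x

training_data = [(1.0, 2.1), (2.0, 3.9), (3.0, 6.2), (4.0, 7.8)]
w = train(training_data)
del training_data  # the data can be thrown away; only w is kept

print(round(predict(w, 5.0), 2))  # close to 9.95
```

The model keeps a "lesson" (roughly "y is about twice x") rather than the four examples themselves, which mirrors the point above on a vastly smaller scale.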

It Responds Word By Word

When ChatGPT responds to you, it has no grand plan. There’s no 1000-word essay already mapped out in its “mind.” Instead, it chooses words one at a time, each based only on the text that comes before it: your prompt plus everything it has written so far.

Rinse and repeat a few hundred times, and voilà—you have a coherent passage of text!
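The word-by-word loop can be sketched in a few lines of Python. The `NEXT_WORD` table is a hypothetical stand-in for the neural network and is far cruder than the real thing: it looks only at the last word and always returns one fixed successor, whereas a real model weighs the whole preceding text and scores every word it knows. The shape of the loop is the point: each chosen word is appended to the text and becomes context for the next choice.

```python
# Hypothetical lookup table standing in for the neural network.
# Unlike a real model, it looks ONLY at the last word and has one fixed answer.
NEXT_WORD = {
    "the": "cat",
    "cat": "sat",
    "sat": "on",
    "on": "a",
    "a": "mat",
}

def generate(prompt, max_new_words=10):
    """Extend the prompt one word at a time, with no plan beyond the next word."""
    words = prompt.split()
    for _ in range(max_new_words):
        nxt = NEXT_WORD.get(words[-1])
        if nxt is None:  # no known continuation: stop generating
            break
        words.append(nxt)  # the new word becomes part of the context
    return " ".join(words)

print(generate("the"))  # the cat sat on a mat
```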

But how does it choose each word? This is where the neural network comes in. After digesting all those books and web pages, ChatGPT has learned statistical patterns about which words tend to follow each other.

See enough sentences starting with “The cat” and you get a feel for what word should come next. Repeat across millions of such examples, and you have a pretty good model for continuing text in a human-like way.
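A crude version of "learning which words follow each other" is a bigram model: count how often each word follows each other word in a corpus, then turn the counts into probabilities. The four-sentence `corpus` below is hypothetical and lowercased, and ChatGPT's network learns far richer patterns than adjacent-word counts, so treat this as an illustration of the statistical idea rather than of ChatGPT's actual mechanism.

```python
from collections import Counter, defaultdict

# A tiny hypothetical corpus; real training data has hundreds of billions of words.
corpus = [
    "the cat sat on the mat",
    "the cat ate the fish",
    "the cat sat by the door",
    "the dog sat on the rug",
]

# follow_counts[prev][nxt] = how many times word `nxt` directly followed word `prev`
follow_counts = defaultdict(Counter)
for sentence in corpus:
    words = sentence.split()
    for prev, nxt in zip(words, words[1:]):
        follow_counts[prev][nxt] += 1

def next_word_probs(word):
    """Turn raw follow-counts into probabilities for the word after `word`."""
    counts = follow_counts[word]
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}  # empty dict for unseen words

print(next_word_probs("cat"))  # 'sat' about 0.67, 'ate' about 0.33
```

In this corpus "sat" follows "cat" twice and "ate" once, so the model has "a feel for" which word should come next, derived purely from counting.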

Statistics and Pattern Recognition

Unlike old-school AI systems that relied on hard rules and logic, ChatGPT is all about pattern recognition gleaned from data. It doesn’t comprehend what it’s writing in any deep way—and has no common sense or understanding of the world beyond what’s implicit in its training data.

This learning-based approach is what gives ChatGPT its open-endedness and creativity. Armed with just the statistics of how humans write, speak, and communicate, it can improvise original text that usually passes the sniff test.
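Part of that open-endedness comes from not always choosing the single most likely next word. Wolfram's article describes ChatGPT picking words at random in proportion to their predicted probabilities, with a "temperature" setting controlling how often lower-ranked words are used. The sketch below assumes hypothetical probabilities and helper names; raising each probability to the power `1 / temperature` and renormalizing is one standard way to apply a temperature.

```python
import random

def apply_temperature(probs, temperature):
    """Reweight p -> p ** (1 / temperature), then renormalize to sum to 1.

    temperature < 1 sharpens the distribution toward likely words;
    temperature > 1 flattens it so rarer words show up more often.
    """
    weights = {w: p ** (1.0 / temperature) for w, p in probs.items()}
    total = sum(weights.values())
    return {w: x / total for w, x in weights.items()}

def sample_next(probs, temperature=0.8, rng=random):
    """Pick the next word at random, weighted by its temperature-adjusted probability."""
    adjusted = apply_temperature(probs, temperature)
    words = list(adjusted)
    return rng.choices(words, weights=[adjusted[w] for w in words], k=1)[0]

# Hypothetical next-word probabilities after "the cat".
probs = {"sat": 0.5, "ate": 0.3, "slept": 0.2}
rng = random.Random(0)  # fixed seed so the run is repeatable
print([sample_next(probs, rng=rng) for _ in range(5)])
```

With a temperature near zero the sampler almost always returns "sat", which tends to produce flat, repetitive text; a moderate temperature lets less likely words through, which is where the improvisation comes from.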

Bigger Scale = Better Performance

ChatGPT achieves its performance through sheer scale. The neural network inside has a whopping 175 billion parameters: adjustable weights on the connections inside the network, each tuned using all that training data.

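It’s loosely modeled on the brain: the network is built from many simple artificial “neurons,” and its parameters set how strongly each one influences the next. More parameters allow it to absorb more from the examples in its textual training data and capture more complex statistical patterns.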
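What "parameters" means can be shown by counting them in a toy network. This sketch assumes a plain stack of fully connected layers, which is much simpler than the transformer architecture behind ChatGPT: every neuron in one layer connects to every neuron in the next with one adjustable weight, and each receiving neuron has one adjustable bias. The helper names are hypothetical.

```python
def dense_layer_params(n_inputs, n_outputs):
    """One fully connected layer: a weight per connection plus a bias per output neuron."""
    return n_inputs * n_outputs + n_outputs

def count_params(layer_sizes):
    """Total parameters for a stack of fully connected layers, e.g. [4, 8, 3]."""
    return sum(
        dense_layer_params(a, b) for a, b in zip(layer_sizes, layer_sizes[1:])
    )

print(count_params([4, 8, 3]))   # 67
print(count_params([8, 16, 6]))  # 246
```

Doubling every layer's width roughly quadruples the count (67 to 246 here), which hints at how parameter totals climb into the billions as networks grow.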

So while the core technology behind ChatGPT is simple, its unprecedented size lets it perform linguistic feats far beyond previous systems.

Does ChatGPT Comprehend Anything?

ChatGPT has no true comprehension. It has no experience of what words mean and no ability to reason about the content it generates. This limits its depth and adaptability.

Chatbots like ChatGPT are masters of producing “small talk” that mimics humans—but genuine understanding remains elusive. Their knowledge comes from statistical patterns, not grounded concepts about the world.

Yet their ability to paraphrase human communication and respond conversationally represents an enormous advance for AI. ChatGPT foreshadows more capable systems to come as researchers make progress on the long-standing challenge of true language understanding.

So while its inner workings are straightforward in hindsight, don’t underestimate the accomplishment. ChatGPT represents a breakthrough in machines that can convincingly converse as people do—if not yet with true human wisdom.

Note: The information in this post is based on a comprehensive and example-packed article by Stephen Wolfram titled “What Is ChatGPT Doing … and Why Does It Work?”.